Can Smart Cameras Improve Evacuations? A New Approach to Smarter Crowd Mapping

February 27, 2026

A new computational method reconstructs depth in sparse remote-sensing images to improve real-time crowd monitoring during disaster response

Accurate crowd monitoring is crucial for guiding emergency evacuations. Non-repetitive scanning LiDAR systems are affordable and offer wide coverage, but their sparse and discontinuous depth data limit practical use. A study from Doshisha University introduces a color-guided depth completion method to reconstruct missing 3D information. The researchers also created a simulated dataset to support the evaluation of future depth reconstruction approaches.

Emergency evacuations during natural disasters such as earthquakes and tsunamis increasingly rely on advanced technology to assess crowd movement and points of congestion in real time. Disaster preparedness therefore calls for technology that is optimized for the task yet easy to use and interpret.

LiDAR, short for light detection and ranging, is a remote-sensing technology that uses laser pulses to measure distance, and it can be used to detect congestion and estimate crowd density. The sensor scans the scene, including the people in it, and calculates the distance to each target from the time a laser pulse takes to reach the target and reflect back. Together, these reflected measurements form a 3D representation of the scene known as a point cloud.
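The distance measurement described above follows the standard time-of-flight relation: the pulse travels to the target and back, so the one-way distance is half the round-trip path, d = c·t/2. The minimal Python sketch below shows that arithmetic and how one range reading plus the beam's direction becomes a single point in the point cloud. The function names and the axis convention (x forward, y left, z up) are illustrative assumptions, not details from the study.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second (exact SI value)


def tof_range(round_trip_s: float) -> float:
    """One-way distance from a pulse's round-trip time: d = c * t / 2."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def to_point(range_m: float, azimuth_rad: float, elevation_rad: float) -> tuple:
    """Convert a range reading and beam direction into an (x, y, z) point.

    Assumed convention (illustrative only): x forward, y left, z up,
    azimuth measured in the horizontal plane, elevation above it.
    """
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )


if __name__ == "__main__":
    # A return after about 66.7 ns corresponds to a target roughly 10 m away.
    d = tof_range(66.7e-9)
    print(f"range: {d:.2f} m")
    # The same reading, 30 degrees to the left and level with the sensor.
    print(to_point(d, math.radians(30), 0.0))
```

Repeating this for every returned pulse, across all beam directions, yields the point cloud.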

Non-repetitive scanning LiDAR is an affordable alternative to conventional high-density LiDAR systems and offers a wider field of view, making it attractive for large-scale deployment in public spaces. However, the same design that provides wide coverage and low-power imaging also produces sparse and irregular measurements. The resulting depth images contain large gaps and discontinuities, which limits the system's usefulness for reporting real-time crowd flow.

To address this critical gap, doctoral student Zixuan Zhang from the Graduate School of Science and Engineering, Doshisha University, Japan, worked with senior colleagues Professor Nobutaka Tsujiuchi and Professor Akihito Ito from the Faculty of Science and Engineering, Doshisha University, and Professor Hirosuke Horii from the Faculty of Science and Engineering, Kokushikan University, to develop an improved color-guided depth completion method designed specifically for non-repetitive scanning LiDAR. The study was made available online on February 5, 2026, and was published in Volume 36 of Transportation Research Interdisciplinary Perspectives on March 1, 2026.

Explaining their motivation, Zhang, the lead author of the study, says, “This research originated from our work on dynamic evacuation guidance systems, where accurate real-time observation of crowd movement is critical. We hope to enhance evacuation safety through a reliable reconstruction of depth in human images generated by non-repetitive scanning LiDAR under realistic sensing constraints.”

In this study, the researchers developed an RGB (red, green, blue) color-guided depth recovery method tailored to the sampling characteristics of non-repetitive scanning LiDAR. The method combines computational techniques such as confidence-aware bilateral filtering and masked reconstruction. It also uses a self-consistent parameter optimization strategy that works without dense ground-truth depth data. Together, these steps allow the method to reconstruct continuous depth structures while avoiding over-smoothing and the spurious filling of gaps in the images.
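The article names these techniques without giving their equations, so the following is only a minimal sketch of the general ideas, under stated assumptions. It uses a joint (cross) bilateral filter in which each sparse depth sample's weight combines a per-sample confidence, spatial distance, and color similarity in the aligned RGB image. A mask leaves a pixel empty when its total weight is too low. Parameters are tuned without dense ground truth by hiding some measured points, completing from the rest, and scoring the hidden ones. The function names, confidence input, thresholds, and parameter grid are all hypothetical and are not taken from the authors' code.

```python
import math
from itertools import product


def complete_depth(depth, conf, rgb, radius=3, sigma_s=2.0, sigma_c=20.0, min_weight=0.05):
    """Hypothetical confidence-aware, RGB-guided (joint) bilateral depth completion.

    depth: rows of floats, 0.0 marks a missing measurement.
    conf:  rows of per-sample confidences in [0, 1] (hypothetical input).
    rgb:   rows of (r, g, b) tuples aligned with the depth grid.
    Missing pixels get a weighted mean of measured neighbours; pixels whose
    total weight stays below min_weight are left at 0.0 (masked), so large
    gaps are not filled with guesses.
    """
    h, w = len(depth), len(depth[0])
    out = [row[:] for row in depth]
    for y, x in product(range(h), range(w)):
        if depth[y][x] > 0:
            continue  # measured pixels are kept as-is
        num = den = 0.0
        for dy, dx in product(range(-radius, radius + 1), repeat=2):
            qy, qx = y + dy, x + dx
            if not (0 <= qy < h and 0 <= qx < w) or depth[qy][qx] <= 0:
                continue
            colour_d2 = sum((a - b) ** 2 for a, b in zip(rgb[y][x], rgb[qy][qx]))
            weight = (
                conf[qy][qx]
                * math.exp(-(dy * dy + dx * dx) / (2 * sigma_s**2))  # spatial term
                * math.exp(-colour_d2 / (2 * sigma_c**2))  # colour (range) term
            )
            num += weight * depth[qy][qx]
            den += weight
        if den >= min_weight:
            out[y][x] = num / den
    return out


def select_params(depth, conf, rgb, sigmas_s=(1.0, 2.0, 4.0),
                  sigmas_c=(10.0, 20.0, 40.0), holdout_every=4):
    """Hypothetical self-consistent tuning that needs no dense ground truth.

    Every holdout_every-th measured pixel is hidden, the rest are used for
    completion, and the mean absolute error on the hidden pixels scores
    each (sigma_s, sigma_c) pair.
    """
    measured = [(y, x) for y, row in enumerate(depth) for x, d in enumerate(row) if d > 0]
    hidden = measured[::holdout_every]
    if not hidden:
        raise ValueError("need at least one measured pixel")
    reduced = [row[:] for row in depth]
    for y, x in hidden:
        reduced[y][x] = 0.0
    best, best_err = None, math.inf
    for s_s, s_c in product(sigmas_s, sigmas_c):
        filled = complete_depth(reduced, conf, rgb, sigma_s=s_s, sigma_c=s_c)
        errs = [abs(filled[y][x] - depth[y][x]) for y, x in hidden if filled[y][x] > 0]
        if len(errs) < len(hidden) / 2:
            continue  # too few hidden pixels recovered to trust this score
        err = sum(errs) / len(errs)
        if err < best_err:
            best, best_err = (s_s, s_c), err
    return best, best_err
```

Consider a toy scene in which a red "person" at 2 m stands in front of a blue background at 5 m. The color term keeps background depths from bleeding into the person's silhouette, and the coverage check in `select_params` stops a parameter pair from winning simply by filling fewer pixels. The brute-force loops are written for clarity; a practical system would vectorize them.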

A key highlight of this study is the creation of a simulated dataset that mimics the sparse, irregular measurements of non-repetitive scanning LiDAR. Zhang explains, “Until now, the lack of publicly available datasets that replicate the irregular sampling characteristics of non-repetitive scanning LiDAR, together with corresponding dense ground-truth depth maps and color images, has posed a major challenge for the accurate evaluation of depth recovery methods.” The authors took dense ground-truth depth images and paired color images from a 3D human motion dataset, then simulated the LiDAR's rotation and scanning pattern to generate sparse, irregular depth images from the dense ones.
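The dataset construction step, which turns dense ground-truth depth into sparse, irregular samples, can be sketched as follows. This is an assumption-laden illustration rather than the authors' simulator. The scan is modelled as a rosette traced by two rotating vectors with incommensurate angular speeds, loosely in the spirit of prism-based non-repetitive scanners. The angular speeds, time step, and circular field of view are hypothetical values chosen for the example.

```python
import math


def rosette_mask(height, width, n_samples, w1=1.0, w2=-7.0 * math.sqrt(2.0), dt=0.01):
    """Boolean mask of pixels hit by a hypothetical non-repetitive rosette scan.

    The beam direction is modelled as the sum of two rotating vectors with
    incommensurate angular speeds w1 and w2, so the trajectory never repeats
    and coverage grows with integration time (illustrative assumption).
    """
    mask = [[False] * width for _ in range(height)]
    cy, cx = (height - 1) / 2, (width - 1) / 2
    radius = min(cy, cx)  # circular field of view inscribed in the image
    for i in range(n_samples):
        t = i * dt
        u = 0.5 * (math.cos(w1 * t) + math.cos(w2 * t))  # horizontal, in [-1, 1]
        v = 0.5 * (math.sin(w1 * t) + math.sin(w2 * t))  # vertical, in [-1, 1]
        mask[round(cy + v * radius)][round(cx + u * radius)] = True
    return mask


def sparsify(dense_depth, mask):
    """Keep dense depth only where the simulated beam landed; 0.0 means no return."""
    return [
        [d if hit else 0.0 for d, hit in zip(drow, mrow)]
        for drow, mrow in zip(dense_depth, mask)
    ]


def coverage(mask):
    """Fraction of pixels with at least one simulated return."""
    hits = sum(sum(row) for row in mask)
    return hits / (len(mask) * len(mask[0]))
```

Calling `rosette_mask` with more samples (a longer integration time) raises `coverage`. This mirrors the trade-off described earlier: a non-repetitive scanner is sparse at any instant and fills in only gradually, while the image corners outside the circular field of view are never sampled.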

The proposed method was tested on 30,000 simulated samples, and the results showed improved depth accuracy and structural consistency compared with conventional filtering methods. The framework reconstructed coherent human silhouettes and depth structures, and its low computational cost makes it suitable for disaster-response applications.
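The article does not list the accuracy metrics behind this comparison. As a hedged illustration only, two common ways to score a completed depth map against dense ground truth are mean absolute error (MAE) and root-mean-square error (RMSE). The sketch below also reports coverage, the fraction of ground-truth pixels that received a prediction, because a masked method deliberately leaves some pixels empty. The function names are hypothetical, and these metrics are assumptions rather than the authors' evaluation protocol.

```python
import math


def depth_metrics(pred, gt):
    """MAE, RMSE and coverage of a completed depth map against dense ground truth.

    Only pixels with valid ground truth (> 0) are scored. Pixels the method
    left empty (0.0) are excluded from the error terms but lower coverage,
    so a method cannot look accurate simply by filling less.
    """
    valid = [(p, g) for prow, grow in zip(pred, gt) for p, g in zip(prow, grow) if g > 0]
    filled = [(p, g) for p, g in valid if p > 0]
    if not filled:
        raise ValueError("no pixels with both a prediction and ground truth")
    diffs = [p - g for p, g in filled]
    return {
        "mae": sum(abs(d) for d in diffs) / len(diffs),
        "rmse": math.sqrt(sum(d * d for d in diffs) / len(diffs)),
        "coverage": len(filled) / len(valid),
    }


def dataset_metrics(pairs):
    """Average per-sample metrics over (pred, gt) pairs, e.g. a simulated test set."""
    per_sample = [depth_metrics(p, g) for p, g in pairs]
    if not per_sample:
        raise ValueError("no samples to evaluate")
    return {k: sum(m[k] for m in per_sample) / len(per_sample) for k in per_sample[0]}
```

Averaging per sample weights every frame equally, regardless of how many valid pixels it contains. Pooling all pixels instead would let frames with large silhouettes dominate the score.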

However, the authors caution that because the method relies on color images, its depth recovery accuracy is sensitive to changes in lighting and saturation, motion blur, and misalignment. The method would also need to be evaluated on real-world data before firm conclusions can be drawn about its effectiveness in emergency evacuation guidance systems. “As LiDAR sensors become more widely integrated into smart city infrastructure, robust depth reconstruction methods will become essential for public safety systems. Our research sets the foundation for the implementation of next-generation situational awareness systems in urban environments,” explains Zhang.

Overall, this new method paints a fuller picture by improving the accuracy of depth perception in LiDAR images, thus advancing the reliability of crowd assessment for disaster management.

Computational depth recovery of sparse images from non-repetitive LiDAR to enhance the accuracy of crowd monitoring

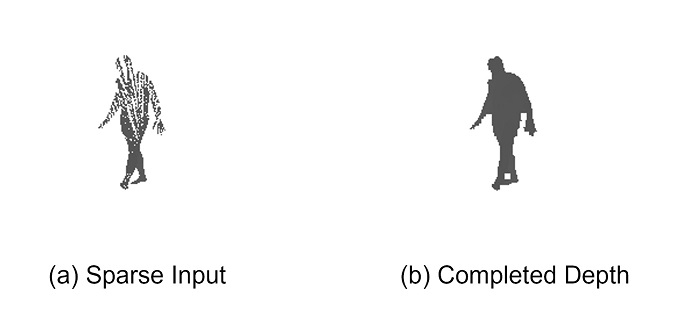

Sparse and irregular depth measurements from non-repetitive LiDAR (left) and the reconstructed dense depth map using the proposed RGB (red, green, blue)-guided completion framework (right).

Image Credit: Zixuan Zhang from Doshisha University, Japan

Image license type: Original content

License restriction: Credit must be given to the creator.

Reference

| Title of original paper | RGB-guided sparse depth completion for non-repetitive scanning LiDAR in crowd dynamics analysis |
| --- | --- |
| Journal | Transportation Research Interdisciplinary Perspectives |
| DOI | 10.1016/j.trip.2026.101879 |

Funding information

This work was supported by JSPS KAKENHI (grant number: JP22K04639).

EurekAlert!

https://www.eurekalert.org/news-releases/1117884

Profile

ZHANG Zixuan

Zixuan Zhang is a doctoral student at the Graduate School of Science and Engineering, Doshisha University. Zhang earned a Master of Engineering degree in Mechanical Engineering from Kokushikan University, Tokyo, in 2023. Since enrolling in the doctoral program that same year, Zhang has focused on intelligent transportation systems, LiDAR sensing technologies, and crowd dynamics analysis, with a particular interest in improving depth perception and situational awareness for disaster-response applications.

Ph.D. Student, Graduate School of Science and Engineering

TSUJIUCHI Nobutaka

Professor Nobutaka Tsujiuchi is a distinguished expert in mechanical dynamics, vibration control, and human-machine interactions. His research focuses on the dynamic behavior of mechanical systems, advanced vibration and noise mitigation techniques, robotic motion control, and the integration of human ergonomics into intelligent machinery. At Doshisha University’s Department of Mechanical Systems Engineering, he leads research that bridges theoretical dynamics with practical applications, including sensor-integrated systems and control strategies for complex mechanical environments. He has also contributed to professional engineering societies and led multiple interdisciplinary projects aimed at improving machine-human interactions and the dynamic performance of mechanical systems.

Professor, Faculty of Science and Engineering, Department of Mechanical Systems Engineering

ITO Akihito

Professor Akihito Ito is a faculty member in the Department of Mechanical Science and Engineering at Doshisha University. His research interests include robotics, tactile information processing, mechanical dynamics, and control systems. Professor Ito’s work focuses on enhancing robotic system performance, motion control, and the integration of sensory feedback for improved robot interaction and stability. With extensive experience in both academic research and practical engineering applications, he has contributed to the development of advanced robotic platforms and control algorithms that support dynamic system coordination.

Professor, Faculty of Science and Engineering, Department of Mechanical Systems Engineering

Media contact

Organization for Research Initiatives & Development

Doshisha University

Kyotanabe, Kyoto 610-0394, JAPAN
